Evaluating LangGraph applications

You can set up Openlayer tests to evaluate your LangGraph applications in development and monitoring.

Development

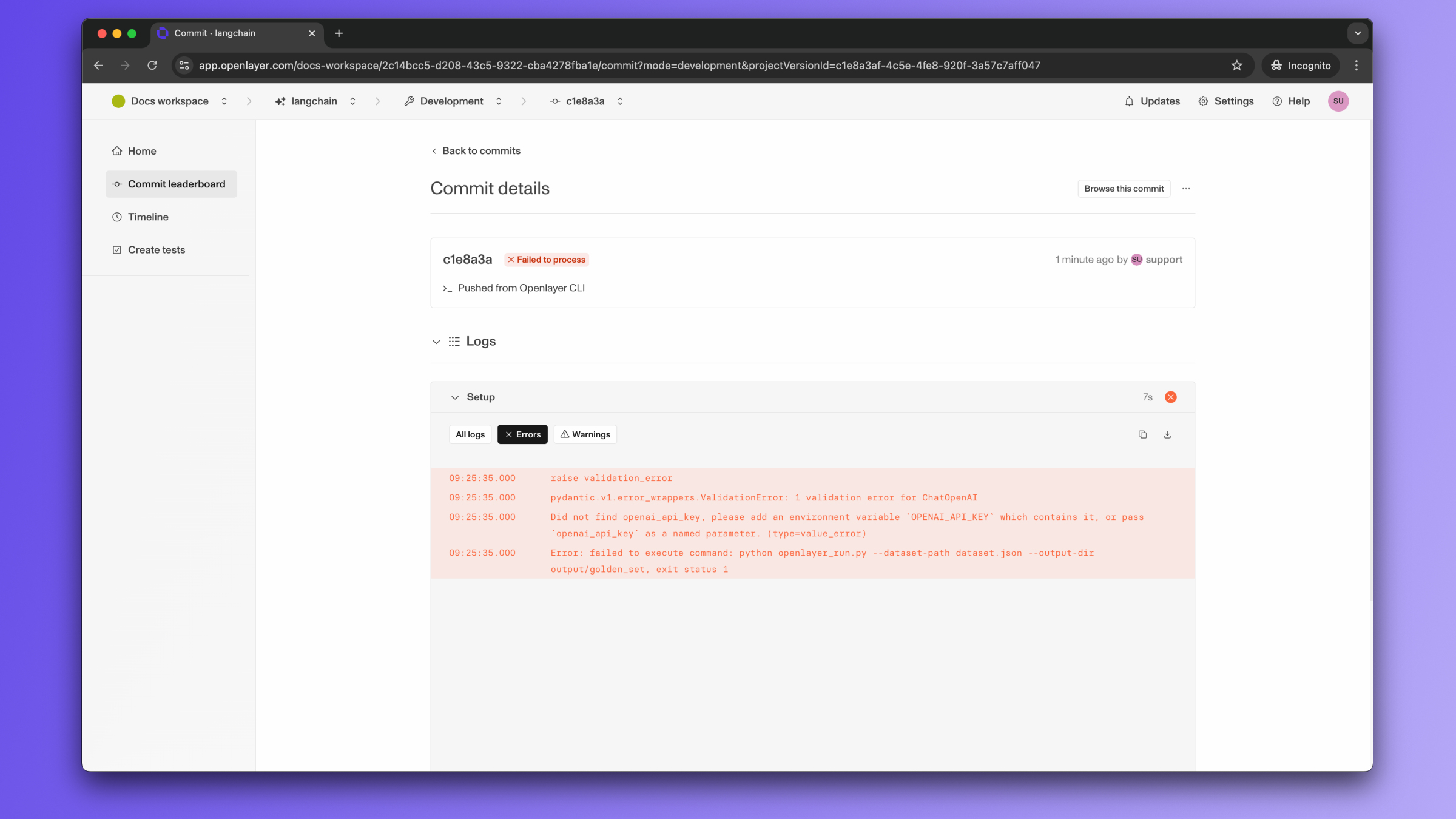

In development mode, Openlayer becomes a step in your CI/CD pipeline, and your tests are evaluated automatically after being triggered by certain events. Openlayer tests often rely on your AI system’s outputs on a validation dataset. As discussed in the Configuring output generation guide, you have two options:

- either provide a way for Openlayer to run your AI system on your datasets, or

- before pushing, generate the model outputs yourself and push them alongside your artifacts.

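If Openlayer runs your AI system on your datasets, you must provide the API credentials your application needs. For example, if your application uses ChatOpenAI, you must provide an OPENAI_API_KEY, if it uses ChatMistralAI, you must provide a MISTRAL_API_KEY, and so on.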

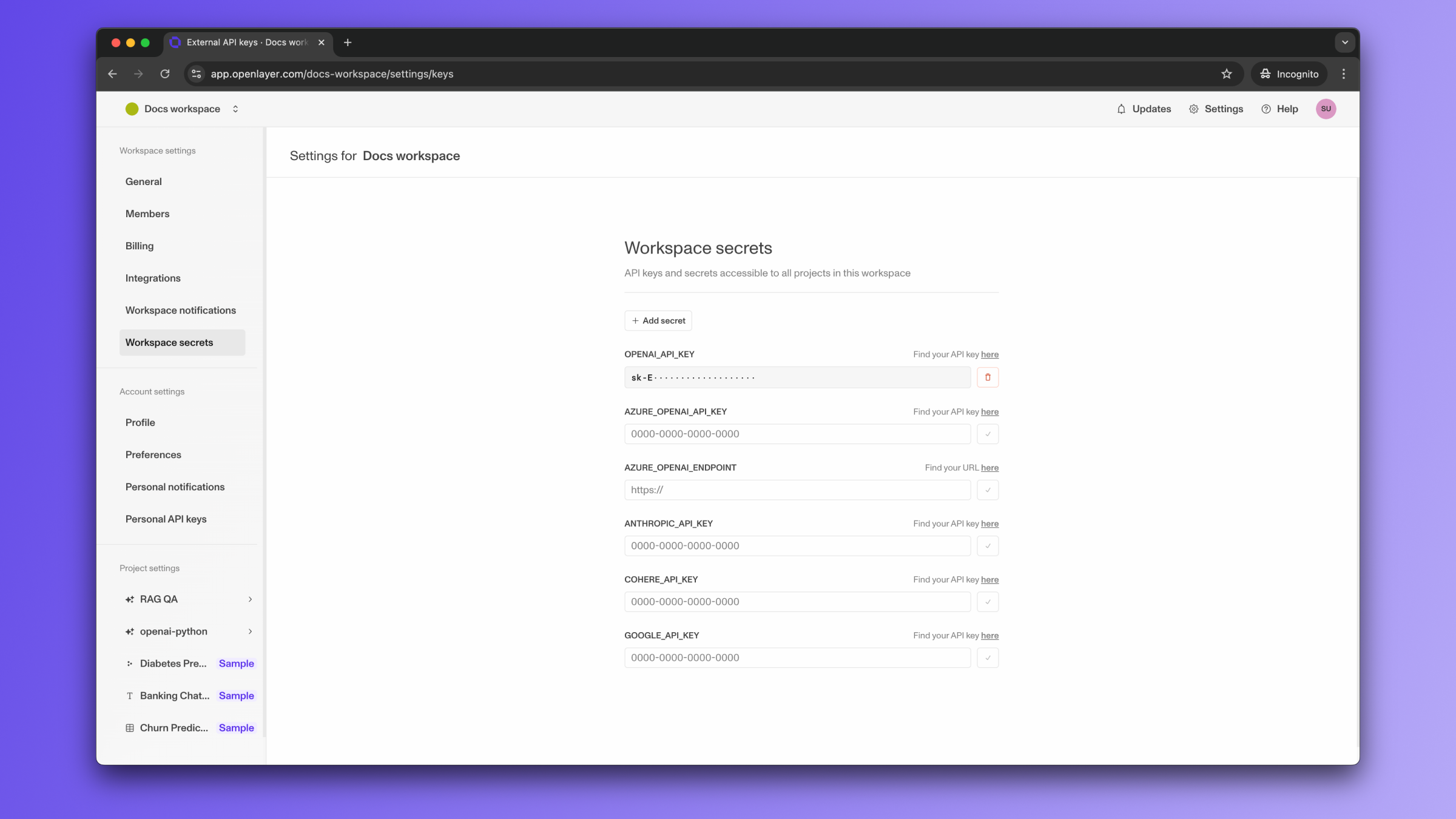

To provide the required API credentials, navigate to “Settings” > “Workspace secrets” and add the credentials as secrets. If you do not see a field for the API credential your application needs, click the “Add secret” button at the top to add more secrets.

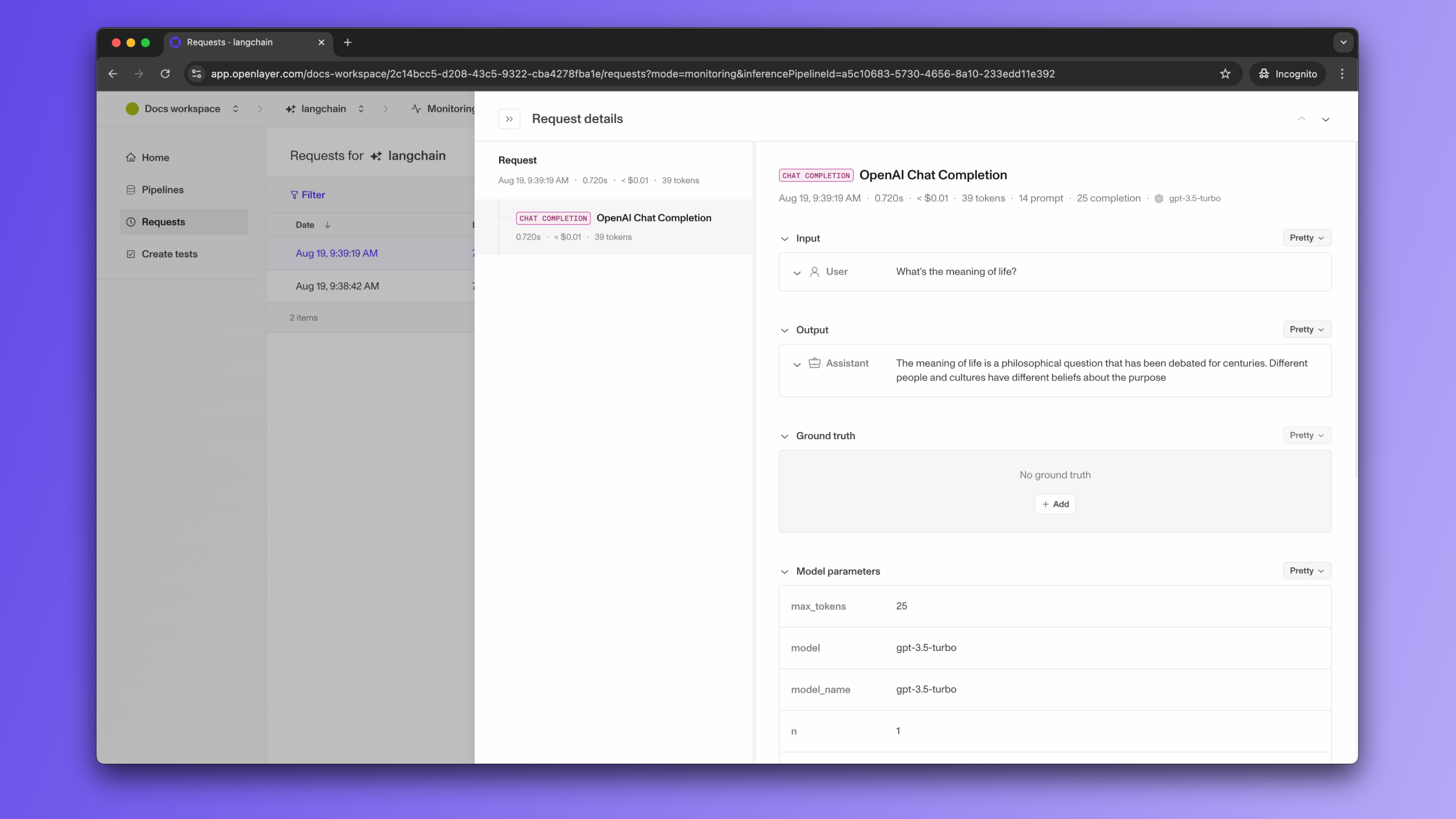

Monitoring

To use the monitoring mode, you must set up a way to publish the requests your AI system receives to the Openlayer platform. This process is streamlined for LangGraph applications with the Openlayer Callback Handler. To set it up, follow the steps in the code snippet below:

See full Python example
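As a rough sketch of what such a setup might look like, the snippet below builds a one-node chatbot graph and attaches the handler at invocation time. It assumes the handler is exposed as `langchain_callback.OpenlayerHandler` in `openlayer.lib.integrations`, that the Openlayer SDK reads `OPENLAYER_API_KEY` and `OPENLAYER_INFERENCE_PIPELINE_ID` from the environment, and that the chatbot uses `ChatOpenAI`. The model name and the helpers `missing_env_vars`, `build_chatbot`, and `ask` are hypothetical names chosen for illustration. Treat the full Python example as the authoritative reference.

```python
import os

# Environment variables this sketch assumes are required: two for Openlayer,
# one for the (assumed) OpenAI chat model.
REQUIRED_ENV_VARS = (
    "OPENLAYER_API_KEY",
    "OPENLAYER_INFERENCE_PIPELINE_ID",
    "OPENAI_API_KEY",
)


def missing_env_vars(env=None):
    """Return the required variable names that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in REQUIRED_ENV_VARS if not env.get(name)]


def build_chatbot():
    """Build a one-node LangGraph chatbot (illustrative only)."""
    # Third-party imports are deferred so the helper above can be used
    # even when these packages are not installed.
    from langchain_openai import ChatOpenAI
    from langgraph.graph import END, START, MessagesState, StateGraph

    llm = ChatOpenAI(model="gpt-4o-mini")  # model name is an assumption

    def chatbot(state: MessagesState):
        # Return the model's reply; the state's reducer appends it.
        return {"messages": [llm.invoke(state["messages"])]}

    builder = StateGraph(MessagesState)
    builder.add_node("chatbot", chatbot)
    builder.add_edge(START, "chatbot")
    builder.add_edge("chatbot", END)
    return builder.compile()


def ask(question):
    """Invoke the graph with the Openlayer callback handler attached."""
    missing = missing_env_vars()
    if missing:
        raise RuntimeError(f"Missing environment variables: {missing}")

    # Assumed import path for the Openlayer Callback Handler.
    from openlayer.lib.integrations import langchain_callback

    handler = langchain_callback.OpenlayerHandler()
    graph = build_chatbot()
    # Passing the handler through the run config should propagate it to
    # the nodes and model calls inside the graph.
    result = graph.invoke(
        {"messages": [("user", question)]},
        config={"callbacks": [handler]},
    )
    return result["messages"][-1].content
```

With the three environment variables set, a call such as `ask("What is LangGraph?")` would run the graph once with the handler attached. Passing the handler through `config` rather than to the model constructor is a design choice in this sketch: it keeps the graph definition free of monitoring concerns.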

The code snippet above builds a simple chatbot. However, the Openlayer Callback Handler also works for more complex LangGraph applications, including multi-agent workflows. Refer to the final section of the notebook example for a tracing example with multi-agent workflows.

If the LangGraph graph invocation is just one step of your AI system, you can use the code snippets above together with tracing. In this case, your graph invocations are added as steps of a larger trace. Refer to the Tracing guide for details.